The Conversation Before the Conversation

What an AI scribe moment demonstrates about what we're asking of health workers

Kia ora e te whānau,

Alicia McKay shared a post this week that I haven’t been able to stop thinking about.

She took her daughter to the GP. The nurse practitioner introduced an AI note-taking tool in a “funny little kiddie voice”: meet Heidi, my little helper. A second screen displayed previous patient consultations. When Alicia raised privacy concerns, she was reassured that the session was “unidentifiable” as long as nobody said a name or date of birth.

When she asked where the data was stored, the practitioner paused. “Yes, it’s a good question. We don’t know.”

I jumped into the comments. Practitioners are supposed to gain consent every time they use a tool like this. Someone pushed back - are they, though? Is that actually required? The thread was rich with people’s experiences. Turns out, it depends on who you ask.

You can read Alicia’s original post and the conversation it sparked here

That uncertainty in the comments told me something important. And it wasn’t about the technology.

The problem isn’t Heidi

AI scribes like Heidi are just one part of a much larger picture. Across our health system, technology is being used at every level - some of it sitting right in the room with patients, some of it running quietly in the background, nowhere near a clinical encounter.

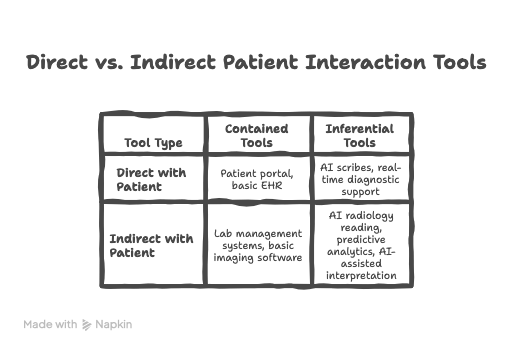

It helps me to think about it in four quadrants.

On one axis: whether the tool is used directly with patients or sits further back in the system - think radiologists using AI-assisted image review, or laboratory scientists working with predictive analytics. On the other axis: whether a tool is essentially contained (it stores and transmits information, but doesn’t interpret or generate) versus inferential (it actively reasons, connects to external systems, generates output, learns).

What this grid helps me see is that the consent and transparency obligations don’t disappear as you move further from the patient. They transform. In the direct/inferential quadrant, the practitioner needs to be able to explain what the tool does and invite a genuine response - in the moment, under time pressure, possibly with a child in the room. In the indirect/inferential quadrant, somebody still had to decide whether patients know their scan was read by an algorithm. Who made that call? Do the staff interacting directly with the patient know what to say if asked?

Whose job is it to prepare them?

Here’s the question I keep coming back to. Not “did the nurse practitioner do the right thing?” but “who prepared her to?”

Was she uncomfortable using Heidi and hoping to ease past it? Nervous that a patient might say no and unsure how to manage that? Unclear on how the tool actually worked, or what “unidentifiable” really meant in practice? Possibly all three.

We are rolling out inferential tools across our health system, and in many cases practitioners are sourcing their own. All the while, we assume that the people using them have the literacy to use them safely, explain them clearly, and navigate consent with confidence. We assume they know which tools connect to the internet and which don’t. We assume they understand what it means for data to be “stored offshore.” We assume they can field a question from a patient they weren’t expecting.

Where did that capability come from? Was it taught? Or assumed?

The health workforce is being asked to have an increasingly complex conversation with patients on behalf of systems and vendors they may not fully understand themselves. That is a significant ask. And it falls hardest on the practitioner in the room, the one with thirty seconds before the next patient, the one whose name is on the notes.

Same failure, different era

Here’s the thing. When Alicia left that appointment, she needed to update their address. The receptionist wasn’t logged in on that computer. It was “sort of tricky.” So the receptionist handed over a Post-it note and went out the back.

They waited. She didn’t come back. They left their address on a sticky note at an unmanned front desk.

No AI involved. No inferential tools, no data sovereignty questions, no algorithms. Just an address on a piece of paper, left where anyone could see it.

The same practice. The same day. The same assumption - that the person in the role would know how to handle the situation safely, without anyone having specifically prepared them to do so.

That’s not a technology problem. It never was.

Ngā mihi nui, Jane

Kia ora, ko Jane tēnei | I'm Jane 👋🏼

🧩 I bring health together - helping rural health system leaders build inclusive recruitment and retention strategies that acknowledge barriers while actively dismantling them so every profession has a voice at the table

🧩 I talk about #ruralhealth #alliedhealth #equity #socialjustice because #HealthWorksBetterTogether

🧩 Want more like this but bite-sized? Check out my LinkedIn content https://www.linkedin.com/in/janegeorgenz/